With the internet having well and truly taken over the way the world works and lives, many individuals and businesses have begun using it to market their products and generate revenue.

Big data is widely seen as the future of computing and marketing because of the vast troves of information available on the internet. It has emerged in other industries as well. In healthcare, for example, big data helps with early disease detection and with keeping track of patient details for a more effective health assessment. Every individual leaves a digital footprint every time they transact online, and this helps companies learn more about them.

By understanding these individuals, companies can market products based on their preferences and increase the chance of a purchase. Big data and Hadoop are technologies that enable the collection and collation of customer data. Thus, a Big data and Hadoop certification qualifies you to work for a company that breaks down and analyses datasets.

What is Hadoop?

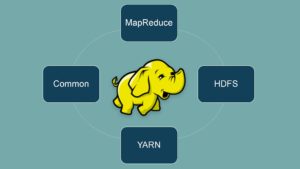

Hadoop is a set of open-source programs and procedures that act as the backbone of big data operations. Because they are open source, anyone can use and modify them. Hadoop is essentially broken down into four modules.

Once a person completes Hadoop training and data analytics courses, they will be able to create data structures that carry out particular tasks and help improve data analytics.

These modules include:

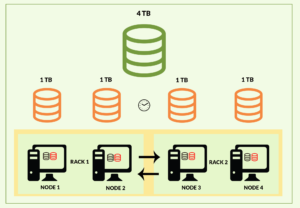

A distributed file-system

The distributed file system allows data to be stored in a format that is easily accessible. The data is spread across a large number of linked storage devices. This stored data is what MapReduce, the processing module of Hadoop, then works on.

A “file system” is the method a computer uses to store its data so that the data can be found and used. This is normally determined by the computer’s OS, but Hadoop uses its own file system, which sits above the file system of the host computer. This means the data can be accessed from any computer running any supported OS.
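To make the idea of data spread across linked machines more concrete, here is a minimal sketch in plain Python. It does not use any Hadoop API. It assumes details that are not covered in this article: that the Hadoop file system splits a file into fixed-size blocks (128 MB by default in recent versions) and keeps three copies of each block by default. The node names and the round-robin placement are hypothetical simplifications; real placement also takes the rack layout into account.

```python
BLOCK_SIZE = 128 * 1024 * 1024  # assumed default block size: 128 MB
REPLICATION = 3                 # assumed default number of copies per block


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return the sizes (in bytes) of the blocks a file would be split into."""
    full_blocks, remainder = divmod(file_size, block_size)
    sizes = [block_size] * full_blocks
    if remainder:
        sizes.append(remainder)  # the last block may be smaller
    return sizes


def place_blocks(num_blocks, nodes, replication=REPLICATION):
    """Assign every block to `replication` distinct nodes (simple round-robin)."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    placement = {}
    for block_id in range(num_blocks):
        placement[block_id] = [
            nodes[(block_id + offset) % len(nodes)]
            for offset in range(replication)
        ]
    return placement


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3", "node4"]  # hypothetical machines
    blocks = split_into_blocks(300 * 1024 * 1024)  # a 300 MB file
    for block_id, holders in place_blocks(len(blocks), nodes).items():
        print(block_id, blocks[block_id], holders)
```

Because every block lives on several machines, the file stays readable even if one machine fails, and different machines can work on different blocks at the same time.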

MapReduce

The MapReduce module is named after the two basic operations it carries out: reading the data from the database and putting it into a format suitable for analysis (map), and performing mathematical operations on that data, such as counts and totals, that help enrich the customer database (reduce).
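The two operations can be illustrated with a small sketch in plain Python. It only imitates the pattern on a single machine and does not use the Hadoop API. The customer records, the age threshold and the function names are hypothetical and chosen purely for illustration.

```python
from collections import defaultdict

# Hypothetical customer records; on a real cluster these would be read
# from the Hadoop file system rather than defined in the program.
CUSTOMERS = [
    {"name": "Ana", "gender": "F", "age": 34},
    {"name": "Ben", "gender": "M", "age": 41},
    {"name": "Cal", "gender": "M", "age": 27},
    {"name": "Dee", "gender": "F", "age": 52},
    {"name": "Eli", "gender": "M", "age": 30},
]


def map_phase(record):
    """Map: turn one raw record into zero or more (key, value) pairs."""
    if record["age"] >= 30:
        yield (record["gender"], 1)


def shuffle(pairs):
    """Group all values by key, as the framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: collapse all values for one key into a single result."""
    return key, sum(values)


def run_job(records):
    """Run map, shuffle and reduce in sequence on one machine."""
    pairs = [pair for record in records for pair in map_phase(record)]
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())


if __name__ == "__main__":
    # Counts customers aged 30 or over, per gender.
    print(run_job(CUSTOMERS))
```

On a cluster, the map step runs in parallel on many machines, each working on its own part of the data, and the framework takes care of grouping the intermediate pairs before the reduce step runs.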

Hadoop Common

The third module is Hadoop Common, which provides the Java tools that a user’s computer system (Windows, Unix, etc.) needs in order to read the data saved in the Hadoop file system.

YARN

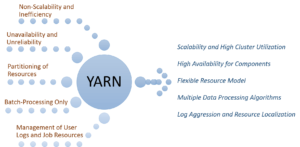

The last module is YARN, which manages the resources of the systems that store the data and run the data analysis.

Many other procedures and tools have also become part of the Hadoop framework. The four principal modules, however, are the ones mentioned above, and with them you will be able to begin your journey into data analytics.